Your Move, Claude

LLMs aren't as good at everything as they seem

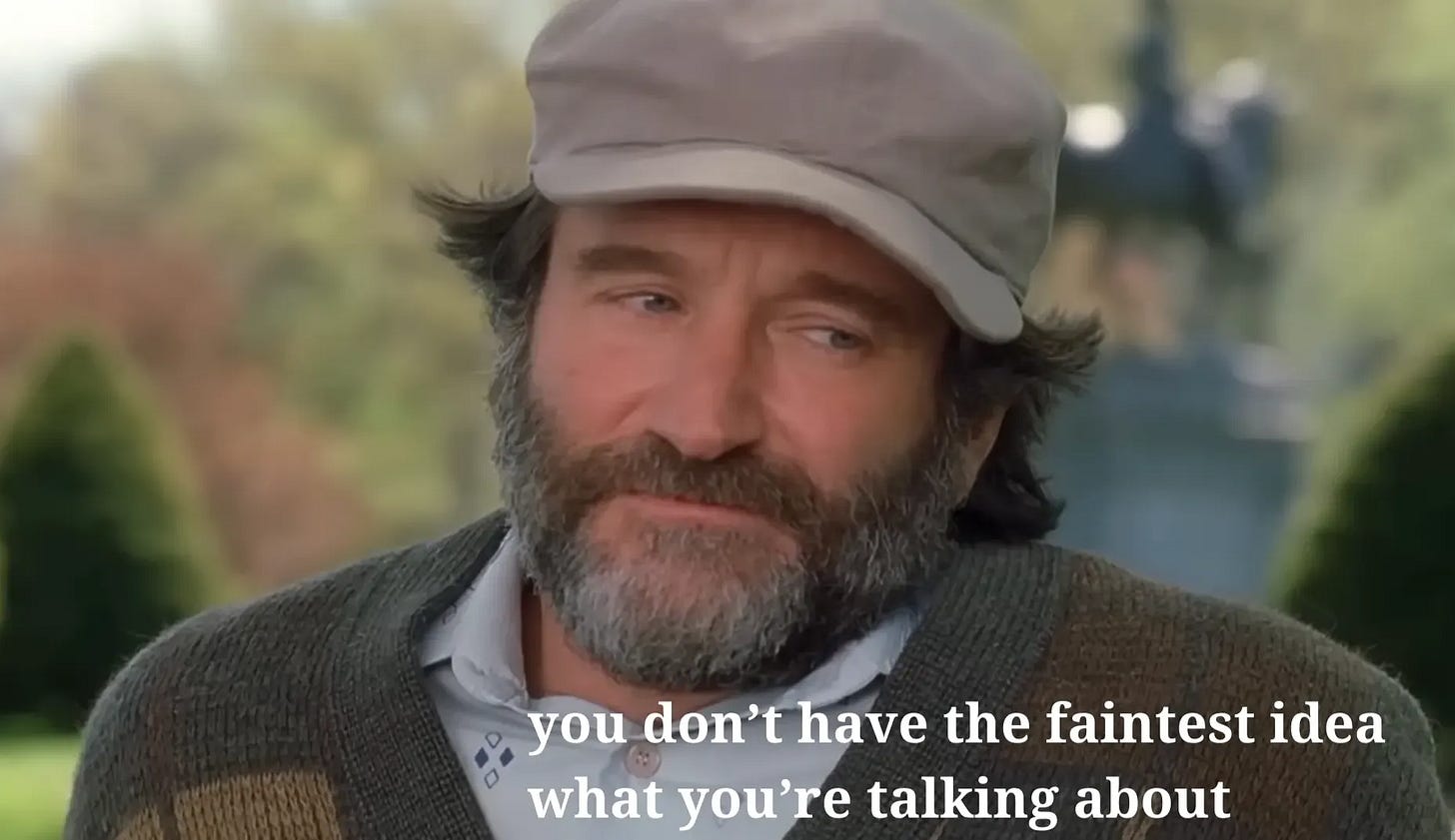

There’s a scene in the film *Good Will Hunting* that I think about a lot in relation to LLMs, where a therapist tells the brilliant but flawed young protagonist: I’m not going to listen to you because you don’t know anything. Your knowledge comes from books. Mine comes from decades of real experience. It’s a good scene in a good movie. And it’s strikingly relevant to LLMs’ level of knowledge in January 2026.

Here Be Dragons

I don’t say this to diminish LLMs’ utility or the growth in their capabilities. In the last few years, with reasoning, integrated web search, bigger and more powerful models, integrated code execution, etc., the models have so far surpassed the “next token prediction” mental model that they’re basically off the edge of the world. Off the end of the Mercator projection. Chilling with the dragons on the edge of the map.

In fact, LLMs have gotten so good that it’s actually quite hard to critique them anymore without coming across as a Luddite or out of touch, and I like to think that I am neither. Instead, I think I see that today’s LLMs are so good at some things that they can fool people into thinking they are good at everything, and there’s a systematic way in which LLMs are better at some things than others that we should be aware of.

All of the aforementioned advances smooth over an underlying problem: LLMs’ knowledge is real, their capacity to get more knowledge and summarize and integrate it is real, but this knowledge is systematically incomplete right now (as of January 2026). It frequently fails the vibe check in an important way. It can 1000000% pass the LinkedIn think-fluencer Turing Test (or is it the humans that are passing the reverse Turing there?). But can it actually advise you on how to act in the world to achieve your objectives in complex and specialized situations?

Cordyceps

If you took a person and made their brain run a simple program of 1) asking Opus 4.5 what to do, 2) doing it, 3) observing results, and 4) asking Opus 4.5 what to do next, would that person succeed at attaining their goals? I would argue absolutely not, and that at least half of the problem is that the LLM’s knowledge comes from text [1]. In other words, LLMs have access to legible knowledge derived from text, not tacit knowledge derived from real experiences that aren’t really reducible to text or aren’t frequently recorded in the texts that have made their way into model training.

Nikhil Krishnan and Adu Subramanian were talking about this tacit knowledge problem with LLMs over a year ago and only recently have I really begun to understand what they meant (I think). LLM heuristics come from summarized, distilled representations of memorized words and textual narratives. An AI model can memorize or just look up the year the Thirty Years’ War started and convincingly (to a non-expert) argue various positions related to that war’s influence on subsequent events. But it has more trouble with questions that pertain to people or specialized and novel situations and circumstances [0].

Hell is Other Non-AIs

The “dealing with people” part is particularly problematic, since many LLMs attempt to take sort of a globally-empathetic and deeply-concerned-with-human-emotion view on things by default, and this view almost always leads them astray. Ask yourself, when was the last time Claude or ChatGPT made a “feel” statement that actually made sense and was something a person might actually feel?

Asking ChatGPT what factors led to the outbreak of WWI is going to lead to a much better response than asking it “What do I say in this email to maximize the chance that my client will renew the contract next year?” Claude can tell you what to say in the meeting but it can’t help you come up with a game plan to deal with people’s faces tightening and their smiles thinning as you speak, dead silence following your “any questions” and then everyone going back to their desks and working at cross purposes to your proposal.

If you ask Claude how to make your company successful or deal with a specific thorny problem, it’s drawing on sources of information that are well known and already out there. These facts are part of the conventional wisdom. They’re already priced in, and now, thanks to Claude, nearly everyone has access to them. Since everyone has access to it now, this legible information becomes even less valuable. It’s table stakes.

Hidden Knowledge

But a great deal of valuable information and knowledge is tacit. Secret. Occluded. Not legible. Unwritten. Bound up in NDAs and in the experience of people who have already tried to solve these problems and the lessons they learned that have never been committed to publicly-available text -- or even the private repositories of text drawn from the entire Internet, video transcripts, books, historical artifacts, public datasets, even synthetic text outputs from higher level LLMs or whatever other massive sources of tokens Google/OpenAI/Anthropic can delve into with their very deep pockets.

These are things that the LLM doesn’t know that it doesn’t know, or, if it does know it doesn’t know, almost always discounts the importance of. Of the entire possible universe of things to know - you as an individual will only ever occupy a pretty small space, and many of the things that you “know” are actually wrong, outdated, over-simplified, conditional, or nuanced in ways that actually mean that they don’t apply to the situation that you find yourself in the way you thought they did.

This hole where tacit knowledge should be is even less available to the ‘mind’ of an LLM because of RLHF’s emphasis on producing a pleasing and convincing output. This process further obliterates and occludes the importance of missing knowledge from the LLM’s own cognition. Telling a user about its lack of knowledge in important areas or refusing to answer would increase the probability of the “thumbs down” that the entire RLHF process is meant to minimize.

🌭Thoughtdogs (Emulsified Thoughts)

Instead, you’ll get a thoroughly emulsified torrent of (mostly correct) facts and arguments and then a super-confident synthesis and recommended courses of action based on what the model knows or can find on the ever-shrinking and increasingly slop-ified public Internet. Sometimes it will genuflect towards uncertainty, but you won’t get a true sense of epistemic humility or access to hidden or tacit knowledge.

How does this actually show up in LLM-suggested actions? I’d argue it helps explain the following:

Suggestions that sound plausible but won’t actually work due to <reasons>

Hedged suggestions that don’t actually provide a lot of guidance since they rely on the user understanding the situation so well that they wouldn’t actually need guidance in the first place

Recommendations that would only work under a set of common circumstances that differ from the ones the user is actually in, a difference that the LLM failed to elucidate (and that the user themselves probably doesn’t understand)

Actions that annoy people around the user or damage others’ view of them and make them seem unreliable or out of touch (or reliant on using LLMs for everything)

The exact same action that the median person would try in the situation which already likely has been tried and failed either by the user or someone else, which is why the problem persists

A highly over-confident prescription that has a mixture of all of the above and that still has that lovely LLM flattery that we still struggle with

In other words, often when you ask an LLM “what to do” instead of “what is,” you get a response that merits the *Good Will Hunting* response. “You’re just a [chatbot]. You don’t have the faintest idea what you’re talking about.... I can’t learn anything from you that I can’t read in some...book.”[2]

Any awkward turns of phrase, fragments, typos and incorrect punctuation are the result of the human generation process and should be taken as charming proof-of-work that I actually wrote this instead of instructing an LLM to do so.

Thanks to Nikhil Krishnan, Adu Subramanian, and other Out-of-Pocket-ers who helped shape this piece by reading and pushing back on its central thesis.

[0] I am aware of the attempted “study” that violated a bunch of academic integrity codes, in which an LLM apparently could argue convincingly against people on Reddit. However, I think this actually supports my point here rather than refuting it. Convincing forum arguers can be accomplished by rearranging text into new shapes. Actually influencing and acting in the world, when people have significant skin in the game and there are groups of players involved and not just 1:1s, requires much more than that. https://www.science.org/content/article/unethical-ai-research-reddit-under-fire

[1] The other half is due to the input and output mechanism remaining the same as it is now: text input from one person’s fallible viewpoint. This context pinhole problem is probably even bigger than the tacit knowledge problem.

[2] Full (and uncensored) text: https://scalar.usc.edu/works/henry-v/transcript-good-will-hunting---your-move-chief

God, thanks for reminding me about that scene in Good Will Hunting (miss Robin Williams) - really encapsulates the lack of perspective that Claude/other LLMs really have. Can't replace actual lived experience.

As an LLM-evangelist, I sometimes veer into the idea of this omniscient being who can productivity-maxx my knowledge, output, and even interactions.

So you make a really good point pulling us down to earth, remembering that LLMs really don't know SHIT when it comes to lived-experience or genuine perspective. Life comes from random events, not trained across all possibilities (like Dr Strange checking all the ways to beat Thanos) - and there's an inherent value in having real experience that limits your other options.

The beauty in life is that you only get one shot at it - ALL of it - and it shapes your perspective in a way that LLMs can't because of its inherent nature of emulsifying everything.